Apache Spark is currently the most actively developed Apache top-level project in the big data environment. That alone would be reason enough for us, as a company working in the field of "Search and Big Data", to take a closer look at this project. But there are many more reasons why Spark is relevant, and therefore interesting, to us.

As a vendor-independent company, we work in a wide variety of industries and are not limited to one or only a few use cases. Instead, we build applications across all sectors. This is where Spark's versatility works in our favor: it was not developed for one specific purpose but for general-purpose data processing. The data does not have to be in a particular format, nor does it have to be processed in a particular way before Spark can be used. Spark's core already provides common ways to import data and to transform, evaluate, and analyze it.

But that's not all: Spark also ships with the following built-in libraries: SQL and DataFrames, Spark Streaming, MLlib, and GraphX. They can be used for a variety of purposes. SQL and DataFrames allow relational queries on data that was originally completely unstructured (text) or semi-structured (e.g. log and sensor data, tweets). Because Spark can run on a cluster, this data can come in huge volumes. Spark Streaming can be used for applications that work on a continuous data stream, such as fraud detection, or to join streams with historical data. MLlib is Spark's own machine learning library. It includes algorithms for tasks such as classification, clustering, linear regression, and recommendations.

When working with Spark, you do not even have to settle on a single library; they can be combined in any number of ways. The fact that SQL or SQL-like queries can be run on all types of data also brings on board all the developers who have so far worked with classical relational databases, and they presumably make up a considerable share.

The fact that Spark allows in-memory processing is a significant speed factor, which in many scenarios results in a clear performance gain. One point worth noting, however, is that Spark needs different hardware (in particular, more memory) than a "normal" Hadoop cluster. Hadoop was celebrated in the big data world precisely because it does not require high-end hardware and runs on so-called commodity hardware; because Spark processes data in memory, this is not quite the case for Spark. Everything has its price, or, as Robert A. Heinlein put it: "There ain't no such thing as a free lunch."

And now, finally, perhaps the most important reason: Spark can be connected to other tools we have been working with for years: Solr (also from the Apache ecosystem) and Elasticsearch. We can use Spark to index data, e.g. via the streaming component, and also to run queries and pass the results on for further processing. These combination and integration capabilities between the projects mentioned open up new perspectives on existing data, exciting insights, and innovative processing approaches, which in turn lead to new results.

All in all, there are many reasons to value Spark. Spark is not a one-size-fits-all solution, but its core and, above all, its bundled libraries make a large number of good and innovative applications possible. Its integration into the Hadoop ecosystem and its interplay with well-known tools make Spark an indispensable part of our toolkit, and it will certainly continue to gain importance in the future.

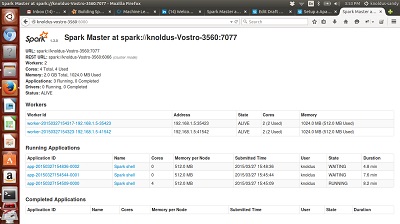

Apache Spark screenshots
